Unraveling the emergence of collective behavior in networks of cognitive agents

Abstract

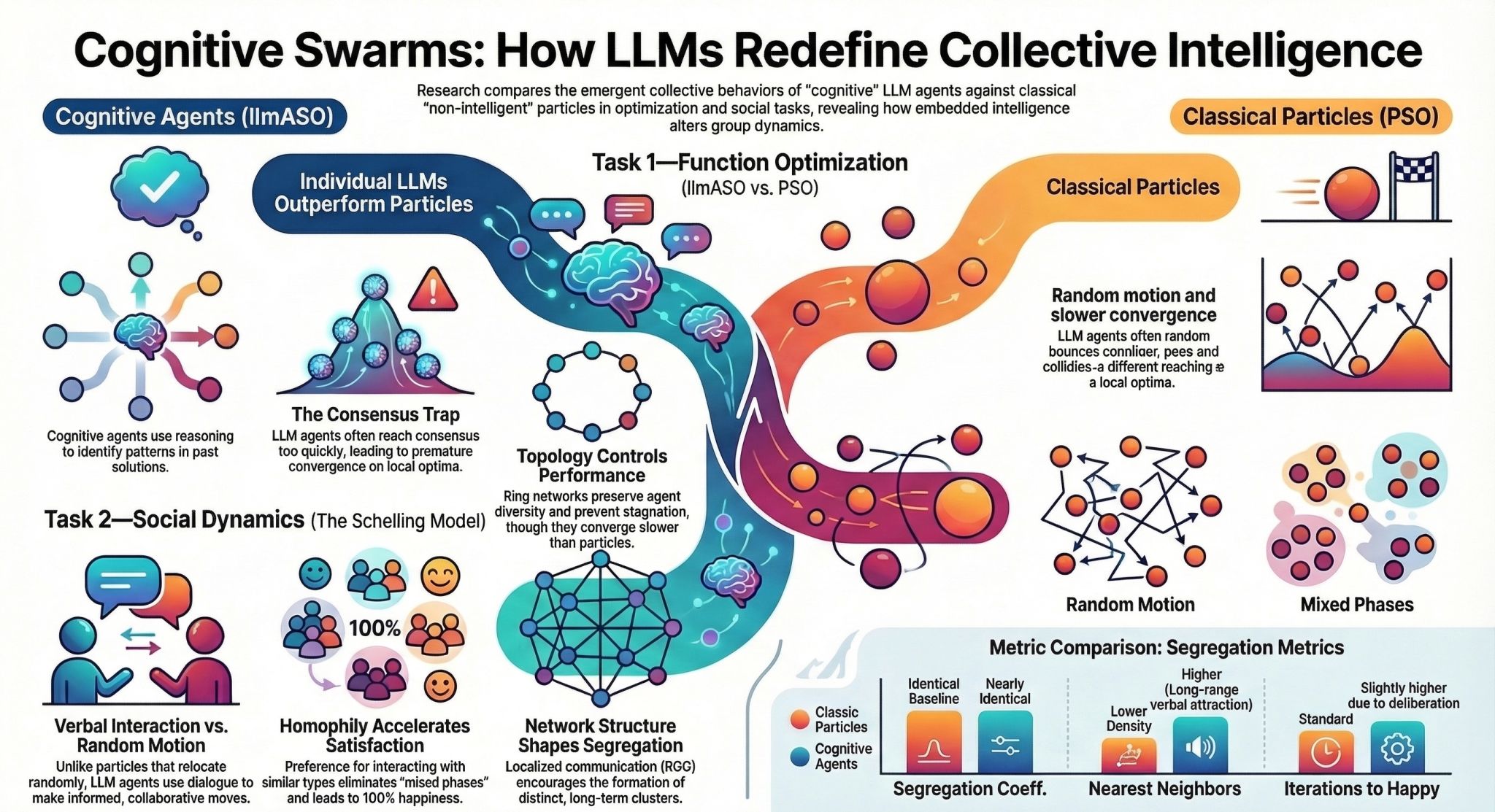

Cognitive agents, powered by Large Language Models (LLMs), possess advanced reasoning and communication capabilities that fundamentally distinguish them from non-cognitive particles, which rely solely on formal rules. While their ability to replicate individual and social human behaviors is still under scrutiny, the impact of their embedded “intelligence” on emergent behaviors remains poorly understood. Here, by comparing cognitive agents with classic particles, we investigate how LLM capabilities shape emergent phenomena in two tasks: function optimization and the social organization that emerges from the Schelling model of segregation. To this end, we introduce LLM Agent Swarm Optimization (llmASO), in which a swarm of interacting LLM agents acts as an optimizer. Our findings reveal that, while individual agents outperform particles in decision-making, their consensus tendencies and ability to exploit patterns can make them prone to premature convergence. Adjusting the network topology can alleviate this effect, but typically at the cost of slower overall convergence than classical Particle Swarm Optimization (PSO). In the Schelling model, by contrast, we demonstrate that local interactions and homophilic mechanisms allow cognitive agents to generate distinct emergent behaviors, underscoring the importance of realistic communication architectures in complex social simulations. This work clarifies how LLM capabilities introduce new mechanisms for collective behavior and has implications for future applications of LLM agents in swarm robotics, social experiments, and complex decision-making tasks.